SUMMARY: These three use cases are driven by data science and have impacted significant bottom-line revenue and subscription growth.

3 data science use cases demonstrate news publisher monetization options

Satisfying Audiences Blog | 29 August 2022

By Matt Lindsay, President, and Arvid Tchivzhel, Managing Director, Product of Mather Economics

“85 percent of AI projects will deliver erroneous outcomes due to bias in data, algorithms, or the teams responsible for managing them” — Gartner

In 2016, Gartner published research noting 60% of data science projects fail. Two years later, it updated the estimate to 85%.

With the influx of trained data scientists and data engineers seeking employment — and a growing number of resources to learn data science (from one-week online boot camps to formal graduate programs) — why is a successful application of data science still so elusive?

Certainly, technical roadblocks are a challenge. Data collection, data quality, and building the right model are important and often overlooked by inexperienced data science teams. Equally important, however, is the organization’s ability to clearly define a business use case along with the practical ability for non-technical business stakeholders (such as marketers and newsrooms) to implement solutions within the tech stack.

The combination of these dimensions is key to succeeding and getting ROI from your investment in data science. Here are three use cases, driven by data science, that have impacted significant bottom-line revenue and subscription growth. These examples also demonstrate how publishers can follow key success factors of applied analytics:

- Definition of monetizable use cases led by business stakeholders.

- Data quality through effective data engineering.

- Accurate modelling insights from data science.

- Successful implementation and validated performance from statistically sound A/B testing within the organization’s current technologies and processes.

Use case #1: Intelligent Paywall™

Mather Economics led the industry in developing models and A/B tests of dynamic metering with homegrown paywalls in 2012. It was not long before the tech stack evolved to include third-party paywall applications, which gave publishers the ability to target readers with personalized experiences and calls to action.

We have come a long way since choosing your meter level was a decision made once per year!

However, even with the ability to dynamically set myriad business rules for audience and content, publishers still struggle to optimize their paywalls. There is a trade-off between maximizing new conversions and minimizing risk to ad revenue.

With the recent trends in news fatigue, aggressive paywalls that are imprecise risk failing to establish engagement with new readers. When to trigger a registration wall instead of a paywall also poses a complex problem: Is it better to force a sales attempt or settle for an e-mail address you can convert later?

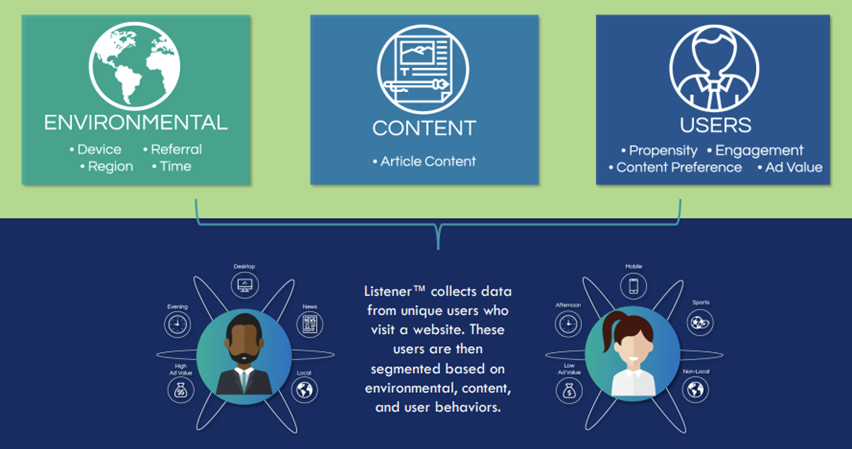

Mather’s Intelligent Paywall™ sits on top of the existing tech stack and applies data science to segment audiences based on their content preferences, engagement levels, geographical location, current relationship with the publisher, propensity to subscribe, expected advertising risk, and many other factors to optimize the customer journeys that best fit an individual reader.

To implement an intelligent paywall strategy, a publisher needs to capture data on the reader’s online activity, augment that data with any first-party data, determine the reader’s propensity to subscribe, and share a segment and user ID (or other actionable signal) with the paywall application.

Effective propensity modelling typically requires sufficient historical data (usually 45-60 days) to observe changes in reader behavior and define other variables for the models.
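As a rough illustration of the final step (sharing a segment and user ID with the paywall application), the sketch below maps a propensity score and an expected advertising risk to a segment label. The `ReaderSignal` fields, the thresholds, and the segment names are all hypothetical and are not Mather's actual model; in practice the propensity score would come from a model fitted on the behavioral history described above.

```python
from dataclasses import dataclass


@dataclass
class ReaderSignal:
    user_id: str
    propensity: float  # modeled probability of subscribing, 0..1 (assumed input)
    ad_risk: float     # expected ad revenue at risk if the reader is stopped (assumed input)


def assign_segment(reader: ReaderSignal,
                   high_propensity: float = 0.6,
                   low_propensity: float = 0.2,
                   ad_risk_limit: float = 1.0) -> str:
    """Return a hypothetical segment label a paywall application could act on."""
    if reader.propensity >= high_propensity and reader.ad_risk <= ad_risk_limit:
        return "paywall"            # likely buyer, little ad revenue at stake: attempt the sale
    if reader.propensity >= low_propensity:
        return "registration_wall"  # settle for an e-mail address and convert later
    return "open_access"            # unlikely buyer: keep building engagement and ad inventory


def to_payload(reader: ReaderSignal) -> dict:
    """Build the user ID and segment pair shared with the paywall application."""
    return {"user_id": reader.user_id, "segment": assign_segment(reader)}
```

A call such as `to_payload(ReaderSignal("u1", 0.8, 0.1))` would produce the signal for one reader. The thresholds here are placeholders that a publisher would tune through A/B testing.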

Intelligent paywalls often generate significant lifts in conversion rates compared to a static paywall. This strategy enables greater engagement with new readers, and it can preserve valuable advertising inventory.

New England Newspapers implemented the intelligent paywall solution on top of Zephr, which increased conversions by 20%.

Use case #2: premium content recommendations

A hybrid content strategy combines premium (also known as subscriber-only) content with metered paywalls and is effective at increasing subscriber conversions. Some audience segments are passionate about a particular topic and will purchase a subscription to read compelling content without following your metered paywall journey.

Data science can predict an article’s propensity to generate subscriptions if set to premium for an audience segment. The “premium flag” on an article can be updated in real time through a communication channel such as Slack (and manually set by the newsroom) or pushed automatically as an actionable signal to a paywall or content management system to avoid human error.

A premium content recommendation engine requires sufficient historical data on prior articles (three months usually offers enough variation and control for the news cycle) to develop accurate predictive models, data on audience segments, and other relevant performance data to control for seasonality, promotions, and other factors that affect subscription purchases.
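One simple way to think about the premium flag is as a comparison between the subscription revenue an article is predicted to generate if locked and the advertising revenue that locking it might cost. The sketch below is an assumption made for illustration, not the engine described in this article; the function names, inputs, and decision rule are hypothetical.

```python
def recommend_premium(predicted_conversions: float,
                      subscriber_value: float,
                      expected_pageviews_lost: float,
                      revenue_per_pageview: float) -> bool:
    """Recommend the premium flag when expected subscription gain exceeds expected ad loss."""
    expected_gain = predicted_conversions * subscriber_value
    expected_loss = expected_pageviews_lost * revenue_per_pageview
    return expected_gain > expected_loss


def premium_signal(article_id: str, **model_outputs: float) -> dict:
    """Build a hypothetical signal for a paywall or content management system."""
    return {"article_id": article_id, "premium": recommend_premium(**model_outputs)}
```

The same signal could be posted to a newsroom channel for manual review or pushed automatically, matching the two delivery options described above.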

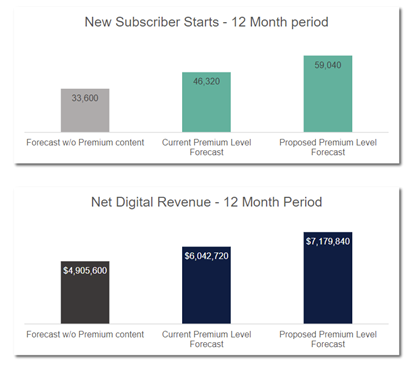

With a premium content engine, one publisher saw a 27% increase in new subscriptions.

One large Midwest publisher in the United States tested the premium content engine and found a significant lift in conversions with minimal impact to pageviews. A 27% lift in new subscriptions was measured and remained consistent throughout the six-month test period. Net digital revenue growth of 19% above budget was forecasted in just the first year. Reporting confirmed zero realized loss to pageviews and advertising.

Use case #3: strategic subscription pricing decisions

There are three important pricing decisions for subscription lifecycle management:

- The acquisition offer.

- The transition from promotional to regular subscription prices.

- Ongoing renewal price increases.

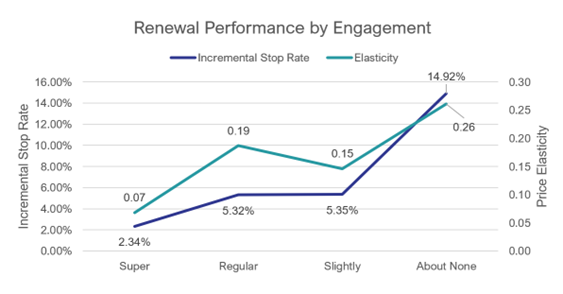

Price elasticities at these three pricing decision points vary considerably across customers. Optimising the pricing strategy for different customer segments can increase average prices with minimal incremental churn among customers.

We recently shared case studies on using lifetime value as your North Star for offer optimization at each of these key points in the customer lifecycle. Measuring price elasticity for each of these stages requires transactional data on prior subscribers, digital activity for subscribers, demographic or other first-party data on customers, product information, online sales attempts and offer term/price/discount information, data on the market, and other relevant data for the model.

A pricing recommendation can be pushed to the paywall, online checkout screens, the subscription management system, or billing platform. A/B tests in each system can validate that the price response is consistent with predicted results.

Hundreds of publishers are implementing a pricing algorithm on digital subscribers, incorporating digital engagement data from Mather’s Listener technology into each pricing decision.

In this case study, price elasticity was nearly three times lower for digitally engaged subscribers.

High digital engagement versus no digital engagement can cut price stops more than six-fold, allowing a greater price increase on these subscribers. Price elasticity is nearly three times lower for digitally engaged subscribers for the publisher cited in this case study.
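For readers less familiar with the term, price elasticity is the percentage change in subscribers divided by the percentage change in price. The sketch below computes it from before-and-after figures for a segment. The function name and the sample numbers are hypothetical and are not taken from the case study; a production model would estimate elasticity statistically while controlling for other factors.

```python
def price_elasticity(price_before: float, price_after: float,
                     subs_before: float, subs_after: float) -> float:
    """Simple point elasticity: % change in subscribers / % change in price."""
    if price_after == price_before:
        raise ValueError("Price did not change, so elasticity is undefined.")
    pct_price = (price_after - price_before) / price_before
    pct_subs = (subs_after - subs_before) / subs_before
    return pct_subs / pct_price


# Hypothetical segments facing the same 10% price increase.
engaged = price_elasticity(10.0, 11.0, 1000, 990)    # loses 1% of subscribers
unengaged = price_elasticity(10.0, 11.0, 1000, 970)  # loses 3% of subscribers
print(round(engaged, 2), round(unengaged, 2))
```

In this made-up example the engaged segment is about one third as price sensitive as the unengaged one, which is the kind of gap that supports a larger increase for engaged subscribers.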

Key success factors

These use cases for applied analytics have several common attributes.

First, each use case has a distinct business impact and KPI that directly measures success in revenue, volume, or average revenue per unit (ARPU).

Additionally, they require robust historical data and an ongoing data pipeline, connecting online and offline data updated at least daily so recommendations can be delivered in time for implementation.

Good ETL (extract, transform, load) and data quality require sophisticated data engineering capabilities, an often-underappreciated input to these processes.

Of course, selecting the appropriate analytics approach, model, and post-estimation analysis is key for the intended application of the insights. Find data scientists who grasp the business model, the subscription relationships, and the levers that will be used to implement and test, and who understand the nature of the data available. Hiring the right data scientists can save months (or years) of development time and cost.

Nuances of markets, products, and audiences deserve careful consideration during the modeling phase. Updates to models will be needed as market conditions, products, and audiences evolve.

Without effective implementation in the existing tech stack and processes, the data science and use case will not be monetized. Knowing what is possible within a publisher’s current tech stack enables maximizing the performance of the use case under any technical constraints.

The business processes in place also affect the implementation options available. Knowing the potential business improvement from changing technologies or processes may provide the financial rationale for these investments.

A plan to measure and A/B test the implementation should be designed early in the scoping of your use case. Leading publishers ensure tests are statistically valid with closed-loop performance reporting where testing results are incorporated into subsequent modeling and recommendations.
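One common way to check whether a conversion lift is statistically valid is a two-proportion z-test comparing the control and test groups. The sketch below is a minimal version using only the Python standard library. It is offered as one reasonable approach, not as the method used in the case studies above, and the counts in the example are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value) for the difference in conversion rates B minus A."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical test: control converts 100 of 10,000 readers, treatment 120 of 10,000.
z, p = two_proportion_z_test(100, 10_000, 120, 10_000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

Whether a lift of a given size clears the significance threshold depends heavily on sample size, which is one reason to plan the test design early in scoping.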

We hope you find these use cases and key success factors helpful in your ongoing analytics journey. Please contact us at matt@mathereconomics.com and arvid@mathereconomics.com to learn more!
